this post was submitted on 19 Oct 2024

Science Memes

Welcome to c/science_memes @ Mander.xyz!

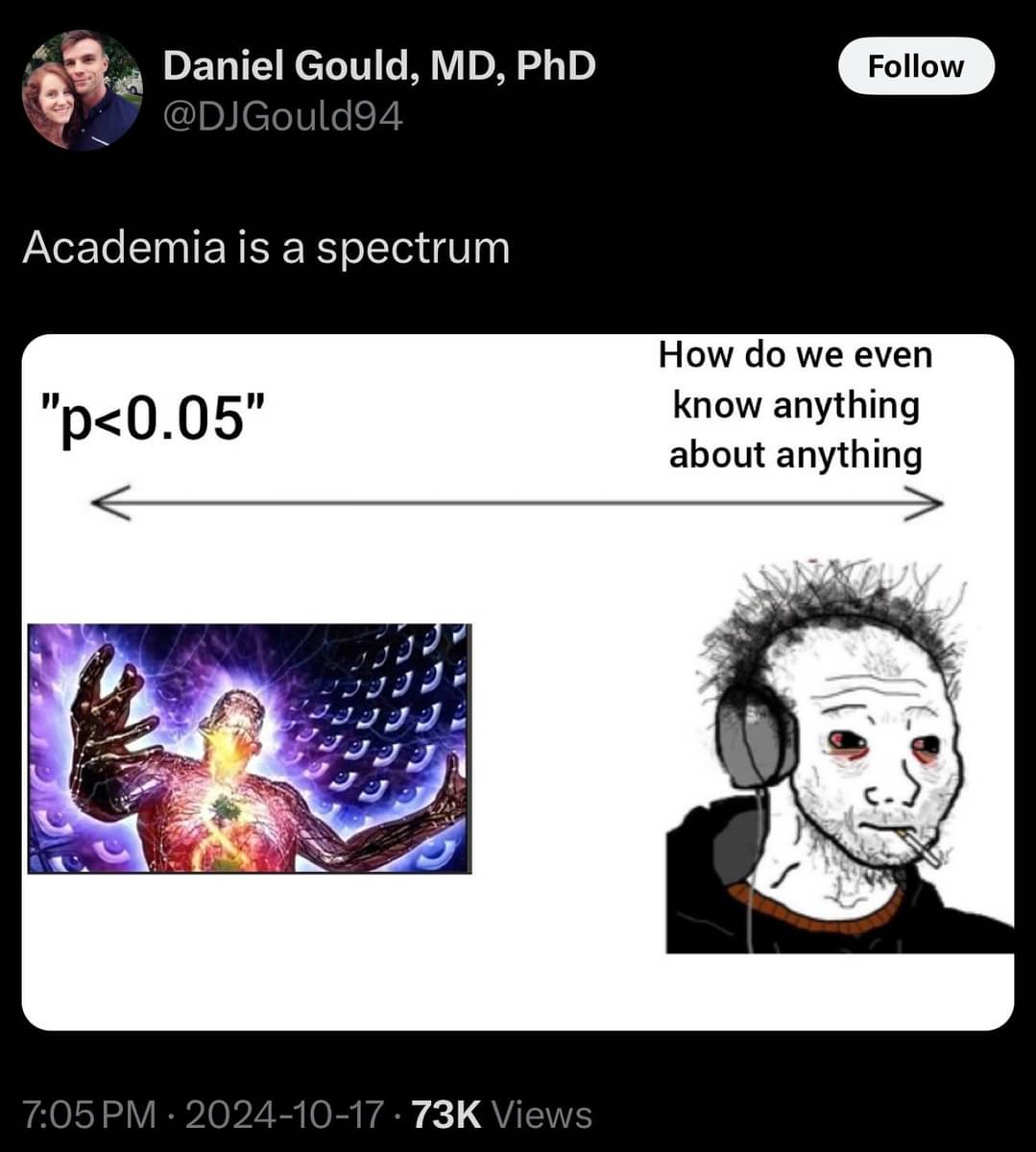

Frequentist statistics are really... silly in a way. And this is coming from someone who has to teach it. Sure, p is less than 5%, but you sampled 100,000 people; at that sample size even a tiny effect size of 0.05 would come out significant. "bUt ItS sIgNiFiCaNt"... Oy.
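To put numbers on that, here is a minimal Python sketch. It assumes "effect size" means a standardized mean difference (Cohen's d) between two equal-sized groups with unit variance, and it uses a normal approximation. The function name `two_sample_p_value` is made up for illustration.

```python
import math


def two_sample_p_value(d: float, n_per_group: int) -> float:
    """Two-sided p-value for a standardized mean difference d.

    Assumes two equal groups with unit variance and a normal (z) approximation.
    """
    # Standard error of the difference between two group means, in SD units.
    se = math.sqrt(2.0 / n_per_group)
    z = d / se
    # Two-sided tail probability of a standard normal beyond |z|.
    return math.erfc(abs(z) / math.sqrt(2.0))


# Same tiny effect (d = 0.05), growing total sample size.
for total_n in (100, 1_000, 10_000, 100_000):
    p = two_sample_p_value(0.05, total_n // 2)
    print(f"n = {total_n:>7,}: p = {p:.3g}")
```

With d = 0.05 the p-value is around 0.8 for 100 people, falls below 0.05 somewhere between 1,000 and 10,000 people, and is on the order of 1e-15 at 100,000. The effect never got bigger; only the sample did.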

I get very suspicious if a paper compares multiple groups and still reports raw p-values. You would use q-values (p-values adjusted for the false discovery rate) in that case, and the fact that they didn't suggests that nothing came up positive after correction.
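One common way to get q-values is the Benjamini-Hochberg procedure; its adjusted p-values are what many papers report as "q". Storey's q-value method differs slightly, so treat this as one illustrative choice, not the only one. A minimal sketch (the name `bh_q_values` and the example p-values are hypothetical):

```python
from typing import List, Sequence


def bh_q_values(p_values: Sequence[float]) -> List[float]:
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(p_values)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    q = [0.0] * m
    running_min = 1.0  # also caps every q-value at 1
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        q[i] = running_min
    return q


# Ten hypothetical tests: three raw p-values look "significant".
raw_p = [0.01, 0.02, 0.04, 0.3, 0.5, 0.6, 0.7, 0.8, 0.9, 0.95]
q = bh_q_values(raw_p)
print(sum(p < 0.05 for p in raw_p))  # 3
print(sum(x < 0.05 for x in q))      # 0
```

Three raw p-values sit under 0.05, but after adjustment the smallest q-value is 0.1, so nothing survives. That is exactly the pattern that makes reporting only raw p-values look suspicious.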

Still, in my opinion it's generally OK if they only use the screen as a starting point and do follow-up experiments afterwards.

Yeah, I used to work in a field with huge samples, so significance wasn't all that useful. I usually just report the significant coefficients and try to make clear how much the outcome changes according to the model. For instance, if a type of curriculum improved test scores, you simply say by how much and, possibly, illustrate it by saying that a student at the 50th percentile would move to the 55th percentile.
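If you assume test scores are roughly normal and the curriculum shifts everyone's score by the same number of standard deviations, the percentile illustration is a short conversion. This is a sketch under those assumptions; the helper names are hypothetical, and `statistics.NormalDist` is from the Python standard library (3.8+).

```python
from statistics import NormalDist

# Standard normal: assumes scores are normally distributed (an assumption).
_STD_NORMAL = NormalDist(mu=0.0, sigma=1.0)


def shifted_percentile(start_percentile: float, effect_sd: float) -> float:
    """Percentile a student reaches if their score rises by effect_sd SDs."""
    z = _STD_NORMAL.inv_cdf(start_percentile / 100.0)
    return 100.0 * _STD_NORMAL.cdf(z + effect_sd)


def effect_for_shift(start_percentile: float, end_percentile: float) -> float:
    """Effect size (in SD units) needed to move between two percentiles."""
    return (_STD_NORMAL.inv_cdf(end_percentile / 100.0)
            - _STD_NORMAL.inv_cdf(start_percentile / 100.0))


d = effect_for_shift(50, 55)
print(f"50th -> 55th percentile needs about {d:.2f} SD")  # about 0.13 SD
print(f"0.05 SD moves the median student to the "
      f"{shifted_percentile(50, 0.05):.0f}th percentile")  # 52nd
```

Under these assumptions, a 0.05 SD effect (the size from the 100,000-person example earlier in the thread) only moves a median student to roughly the 52nd percentile, which is the kind of plain-language framing this comment is arguing for.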

Every field is different, though. I find it crazy how much the psychologists I've worked with cared about R-squared. To each their own, I guess.